How to come to terms with Leon Brillouin’s theorem that no information can be acquired without paying a “price,” how to come to terms with the negentropic nature of information?


The notion of »the object« reveals itself currently in a novel manner that not only produces powerful pragmatic tactics and protocols (e.g. parametrics, agent-based modeling) as well as the aesthetics of a »geometry of the colossal« (Peter Sloterdijk), but it also enriches and complicates the philosophical spectrum of how possibilities and necessities can be reasoned, established, augmented, preserved or exploited. The notion of »the object« occupies a central position in a younger generation of contemporary philosophical thinkers, who seek to situate the role of speculation and imagination in reasoning from within the relation »the object« maintains to its »computability«.

This lecture class introduces some of the positions in these emerging discourses. We will trace a few invariant themes that currently resurface, and we will examine how they do so in novel and interesting ways. In doing so, we will pursue the following vector of interest: Are there novel aspects and interesting framings to be found in these discourses for developing a »speculative architectonics«? And how would such an architectonics relate to the double interest formulated by a »verallgemeinerte Baukultur« (Expanded Culture of Building), namely to expand architecture beyond its disciplinary confines on the one hand, while attending to its local situatedness on the other?

COURSE MATERIALS // The positions that will be discussed include Michel Serres’ philosophy of what he calls “the transcendental objective”, as well as a broad range of thinkers and writers grouped around labels like speculative realisms, new materialisms, accelerationism, object-oriented design, flat ontology, and speculative poetics. Much of this discourse takes place online, so there are plenty of podcasts as well as texts to connect the students directly with »the sources«. Material for weekly preparation will be suggested, and working through it is highly recommended. However, it is not mandatory for participation in this course, and it will not be part of the final exam.

FINAL EXAM // In the last meeting, the students are asked to write a short essay (1-2 pages), in which they portray and discuss one particular line of thinking from what has been discussed throughout the semester (of their own choice).

pdf of the book “The Speculative Turn” is available here at re-press

pdf of the book “Parasite” is available here: The Parasite

ADDITIONAL READINGS

Jean-Luc Nancy, “Myth, Interrupted” in The Inoperative Community (1986) Jean-Luc-Nancy-Myth-Interrupted-2

pdf of Ludger Hovestadt, “A Fantastic Genealogy of the Printable”, in: V. Bühlmann, L. Hovestadt, Printed Physics, Metalithikum I, Springer 2012. PRINTED PHYSICS_Hovestadt

SCHEDULE BY WEEKS (weekly materials to prepare):

OCTOBER 14 // Theory of the Quasi-Object (Michel Serres, from: Parasite 1980, p. 224-34).

OCTOBER 28 // Potentiality and Virtuality (Quentin Meillassoux, from: The Speculative Turn, p. 224-36).

NOVEMBER 4 // Reflections on Etienne Souriau’s Les différents modes d’existence (Bruno Latour, from: The Speculative Turn, p. 304-33).

NOVEMBER 11 // Drafting the Inhuman: Conjectures on Capitalism and Organic Necrocracy (Reza Negarestani, from: The Speculative Turn, p. 182-201).

NOVEMBER 18 // The Ontic Principle: Outline of an Object-Oriented Ontology (Levi R. Bryant, from: The Speculative Turn, p. 261-278).

NOVEMBER 25 // The Generic as Predicate and Constant: Non-Philosophy and Materialism (François Laruelle, from: The Speculative Turn, p. 237-260).

DECEMBER 2 // MANIFESTO for an Accelerationist Politics (Alex Williams and Nick Srnicek) and MANIFESTO Xenofeminism. A Politics for Alienation (Laboria Cuboniks)

FINAL PAPERS due January 15th (extended)

1-2 pages, on one of the texts discussed throughout the semester. State how you make sense of it.

Please email to: buehlmann@arch.ethz.ch and add “Bestimmende Diskurse TU Wien” in the subject line.

Reasoning, argumentation, and »the object« maintain a multiplicitous and unsettled relation within the paradigm of computational modeling: computational models are as reflective as they are projective, as analytical as they are synthetic. How to approach this situation? French philosopher Michel Serres has suggested »a communicational apriori« to representation and modeling. In this seminar we will become familiar with Serres’ philosophy of what he calls »the transcendental objective«, and we will explore what it might mean to »argue« and to »reason« with concepts that are, as he suggests, to be conceived of as spectrums rather than forms. We will attempt to extrapolate what this shift in perspective (from form to spectrum) implies for terms central to reasoning, like object, type, category, class, generalization, abstraction, scheme, and diagram, and we will ask what might be gained from these considerations for clarifying what is at stake in terms like product, article, one-of-a-kind, generic, etc.

Complementing this abstract and formal level, we will look at key moments in the history of architectural theory where the architectural object has been addressed in novel ways. We will do so with the speculative assumption that each of those transformations has introduced a novel »apriori« for reasoning in architecture. As preliminary and tentative suggestions, e.g.: Vitruvius and the »negotiation apriori«, Alberti and the »representation apriori«, Le Corbusier and the »mobility apriori«.

We will try to identify diverse instances of »computational objects« on various scales in contemporary architecture, and compile them in a lexicon. Every student will choose one such »object« and explore in short presentations to the class, with several iterations over the semester, how it might be addressed best – such that a »reasonable« »argument« can be built around it. The elaboration of a suitable format for such an argument is an overall objective of this course.

FINAL EXAM // As the final work with which students conclude and earn credits for this seminar, they will build a story of their “Argument” around a computational object of their choice.

COURSE MATERIALS // A selection of articles, book excerpts and online lectures will be provided. Weekly preparation of about 20-30 pages will be expected for participation in the seminar. The lecture class “The computational object in design and in philosophy” will be largely complementary to this seminar; participation in the lecture course is highly recommended, yet not mandatory.

Michel Serres, The Parasite (2007 [1980]) The Parasite

Jean-Luc Nancy, “Myth, Interrupted” (The Inoperative Community, 1986) Jean-Luc-Nancy-Myth-Interrupted-2

Assignment for Nov 10: see doc here: Assignment for Nov 10

GUESTS // There will be several guest lectures from post-graduate researchers associated with the applied virtuality theory-lab at the CAAD Chair, Institute for Information Technology in Architecture ITA, ETH Zurich. They will present the “computational objects” at stake in a diverse range of architectural research fields. http://www.appliedvirtualitylab.wordpress.com // www.caad.arch.ethz.ch

The Summer 2015 PhD colloquium just ended, where we read Zalamea’s book together with Robert Blanché’s “L’Axiomatique” (read the French version, as the English translation omits the two crucial chapters on the implications of his discussion for science and philosophy at large!) and the latest work by Elias Zafiris on a Geometry of Spectra and the role of cryptography at work in algebra.

A great review, with further context and outlook, of the key themes and intents in Fernando Zalamea’s book “Synthetic Philosophy of Contemporary Mathematics”. By Giuseppe Longo: http://www.di.ens.fr/users/longo/files/PhilosophyAndCognition/Review-Zalamea-Grothendieck.pdf

Anke Hennig

Central Saint Martins, University of the Arts, London

April 16 2015 2 pm

CAAD ETH Zürich

Building HPZ

John von Neumann Weg 9

Floor F

8093 Zürich

Literary Communication: Is there such a thing as literary information?

A contemporary example of the interaction between speculation and poetry is provided by an apparatus built by Peter Dittmer in 2006. The apparatus contains the linguistic codes for dialogue and learns by conversing with people; it is called the Amme (German for ‘wet nurse’) in reference to a procedure whereby the dialogues end either when the participant so chooses or when the Amme tips over a glass of milk.

In my talk I would like to detail the model of literary communication exemplified in the Amme. I will draw on structuralist (Roman Jakobson) and semiotic (Umberto Eco) models and will demonstrate why models that use only the message as their basic unit of communication are doomed to fail. Instead, both the Amme and poetic speculation rely on the grammar of language, taking failure as their starting point.

I would also like to sketch out an alternative tradition of literary interaction, one exemplified by Fedor Dostoevsky’s poetic ontology (Valeri Podoroga) and the speculative poetics of Mallarmé’s Un coup de dés jamais n’abolira le hasard as deciphered by Quentin Meillassoux.

Anke Hennig teaches at Central Saint Martins, University of the Arts, London. Her research interests lie in the poetics of Russian Formalism, the politics of Russian avant-garde media, the aesthetics of totalitarianism and in contemporary theory. In addition to numerous articles, she has also edited an anthology of Russian avant-garde texts (Über die Dinge, 2010). Her recent publications have addressed the chronotopology of cinematic fiction, the present-tense novel, and speculative poetics. She is the author of Sowjetische Kinodramaturgie (2010) and, in cooperation with Armen Avanessian, co-author of Poetika nastoiashego vremeni (2014, Russian translation of Präsens. Poetik eines Tempus, Zurich, 2012) and of Metanoia. Speculative Ontology of Language (Metanoia. Spekulative Ontologie der Sprache, Berlin, 2014).

Forthcoming from Birkhäuser Vienna, applied virtuality book series Vol. 6 (Spring 2015).

By Ludger Hovestadt, Vera Bühlmann, with Sebastian Michael, Diana Alvarez-Marin, Miro Roman

from the preamble:

Orlando – figment of the imagination, ideal and idol and fallible in every way conceivable but flawless in the eye of the beholder – is given to the world perfectly formed by the gods, themselves constructs of the human endeavour to conquer the unknowable and unknown. Timeless, ageless, and deriving immense powers mostly from an indomitable spirit paired with an enquiring mind, Orlando is all human, all humanity, all humility and all pride: an articulation of the embodied consciousness we may call the experience of being alive. Not good or bad, nor beyond the pale is Orlando, Orlando is wonder and discovery and surprise; and strife for self and self-knowledge and hunger for connections that mean something; and need for identity, desire for the loss of self and urge for survival; and yearning for the tender release that is death and fear of the violent crash into the absence of life that is dying. And aching for a place in history and undoing that history bit by bit. And invention, creation, as much as destruction. And cruelty and kindness and the duality of all things polar and their fusion. And the idea of being itself. (Never even mind religion and statehood and status and tribe and the blood ties that bind and sin and redemption or even forgiveness.) Orlando is all made up which is why Orlando is real, and Orlando, of course, is ancient as much as Orlando is new. Orlando is charged by the gods – subject as they are to their own whims and fancies and with wisdom endowed no more and no less than we can conceive – to embark on a quest to The City. And so, as we go to The City, our protagonist shall be Orlando…

from the book cover:

We are all nomads

native to the universe.

This is a stage play,

a narrative about us on the planet.

About how we relate to each other

and to the Great Masters among us.

Welcome to The City.

The views are wide open and bright.

Cities are powerful and challenging.

The heights are lofty the abysses are deep.

Take a seat!

Here’s the setting: A planet.

The generic city.

And 100% urbanism.

Raise the curtain!

Everything is connected.

Everything imparts everything.

The self and the other.

Good and evil.

Adland perfection to bad news provocation.

The burning pain of aching souls versus the purity of nature.

Catastrophe and salvation circling each other forever in their merry-go-round.

A Venetian Carnival: Masks, murder, love, perfidy and beauty.

What should I do,

if I am capable of anything

but have no idea what to do?

A Quantum City invites you to tap into the wealth of indexes belonging to our world. You get introduced to Orlando, a person with no noteworthy qualities, nor any particular properties: a human being who has not yet travelled. And it’s because of this that Orlando is singled out by the gods. He sets sail from Crete towards Athens in 320 BCE, hoping to find evidence of perfection. Throughout the book you follow him on his Odyssey through Western civilisation; though Orlando never quite ends up where she intended to go. And yet, by the time she arrives in the New York of the 1960s, all the decisions that have been made must be called hers. Orlando’s adventure is to challenge the collective origin of intellectual nature. In doing so, Orlando becomes neither an authoritarian functionary, nor a restless activist, nor a comfortable member of a bourgeoisie, but a citizen of the digital age, a Quantum Citizen.

This is not a book as you might expect. It doesn’t offer a theory about cities; rather it speaks of any theory. It is not engaged in solving problems, but it is outraged at the kind of stupidity that cultivates ignorance, at the oppressive and anonymous demand that any solid formulation of a problem should be simple. And above all it takes you onto a journey to (re-)discover The City…

A joint research project by:

The Future Cities Lab, NUS and ETH, Singapore

Research Blog with working materials: http://blogs.ethz.ch/prespecific/

Computational modeling in architecture based on a sheaf-theoretic, quantum-semiotic measurement framework

Excerpt from the abstract of a joint research grant proposal for a cooperation with CPNS, the Center for Philosophy and the Natural Sciences at California State University, Sacramento (freshly submitted, and still under consideration):

Today, the architectural model and the built building both tend to be regarded equally as ‘models’: the omnipresence of images seems to make it superfluous that the ‘manners of appearing’ demand physical reality as a kind of proof. Thereby, the model is not enriched; it loses its very character, namely a particular kind of ‘speculative potency’.

(Werner Oechslin, »Das Architekturmodell – Idea Materialis«, in: Die Medien und die Architektur. Hg. von Wolfgang Sonne, Berlin, München, Deutscher Kunstverlag, 2011, S. 131-155 (my own translation from German, VB))

Models are in demand where abstract ideas play a part and need to be rationalized and communicated. The legacy of architectural models is in closer familiarity with method – with mathesis – than with a modern understanding of theory as a framework for explanation (Werner Oechslin). Its entire reason of existence is to lend itself for active communication in media res, not merely as a reflective explanation post rem or as a normative prescription ante rem. It is precisely this character of modesty, openness to speculation coupled with a firm sobriety towards all too fantastical flights of fancy that seems to have come out of fashion and – as some argue (e.g. Mario Carpo) – out of service within the contemporary paradigm of digital modeling and its fascination for a computer graphically induced Virtual Reality: the omnipresence of images seems to make the notion of the model, with its in media res character, superfluous. This project shares the view of Werner Oechslin who insists that this is not a gain, but an impoverishment for architecture. It pursues the following idea of how this legacy may be continued: it will explore the peculiarly »manifest« and »physical« character of a computational model in terms of recent innovations in quantum information theory and its underlying topological structure, and the manifestation of this structure in solid state physics. We intend to translate mathematically sophisticated but state of the art procedures from quantum physics into the corpus of architectural categories. Our proposition is to introduce »quantum-geometrical spectrums« and »topological phases« into the discourse. This entails that we introduce notions of code, signals, and their constitutive cryptological/analytical character into architectural theory. Like this, we intend to work towards a quantum semiotic understanding of measurement for 21st century architectural theory. 
The formalism for this quantum semiotic measurement framework will be grounded in a topological interpretation of quantum theory which applies sheaf theoretic topology. The architectonic perspective upon this quantum semiotic measurement framework will be grounded in a materialist informatics perspective on code, and a media theoretic interpretation of the role of encryption/decipherment in information technology. Both the formalism and the architectonic perspective will be translated to and grounded in the field of computational modeling in architecture.

The value of this basic research to the larger academic public is that it promises to contribute to the development of an understanding of theory that does not fall back into structuralist frameworks (even if by deferral or negation of them, such as poststructuralism). Our proposition is that the predominance of linearity (even if complexly intertwined) can be replaced with a predominance of spectrums. This allows us to think of networks not as derivatives of linearity, but the other way around: linear connections can be considered as contingent renderings out of concrete, yet quantum-geometrically indefinite, potentia. In architecture, this means that the more or less latent totalitarian genericness of the rapidly spreading practice of »parametricism« can be checked, cultivated and controlled, while appropriating and affirming the digital means on which it works.

With CPNS, we share a common interest in developing a notion of architectonic modeling which we call “Digital Lineamenta”, based on a sheaf-theoretic, quantum-semiotic measurement framework.

Since the lines separating philosophy and science have all but vanished with respect to recent explorations of fundamental questions (e.g., string theory, multiverse cosmologies, complexity-emergence theories, the nature of mind, etc…), the modern breakdown of ‘natural philosophy’ into the divorced partners ‘philosophy’ and ‘science’ must be rigorously re-examined. (Michael Epperson, Founder and Head of CPNS)

The Center for Philosophy and the Natural Sciences, in affiliation with the College of Natural Sciences and Mathematics at California State University Sacramento and the Institute of Mathematics at the University of Athens, Greece, engages in research and scholarship that explores the philosophical implications underlying recent innovations in contemporary science, including those occurring in the areas of quantum physics, cosmology, and the study of complex adaptive systems. This exploration is, in part, a speculative philosophical enterprise intended to contribute to the framework of a suitable bridge by which scientific and philosophical concepts might not only be cross-joined, but mutually supported.

In addition to our research, teaching, and publication initiatives, our mission is to foster and enhance the understanding of modern science and its philosophical, cultural, and social implications. Our work in this regard extends beyond the scholarly community and into the arena of public discourse and public policy. Topics will include the role of science and technology in society, environmental science and policy, bioethics, science education, and other practical applications.

“The Society for the Study of Biopolitical Futures locates itself at the nexus where canonical biopolitical thought needs to be renovated and rejuvenated because it can no longer adequately address the issues and questions—of life and the living, of biopower, of the nature of the political itself—that biopolitical thought itself has raised.”

– Cary Wolfe, co-convenor

ALIVE: Advancements in Adaptive Architecture

edited by Manuel Kretzer and Ludger Hovestadt,

is out and can now be ordered via Amazon.

a collection of essays by leading researchers and practitioners:

Philip Beesley, Jason Bruges, Nicola Burggraf, Vera Bühlmann, Carole Collet, Martina Decker, Stefan Dulman, Alex Haw, Ludger Hovestadt, Tomasz Jaskiewicz, Branko Kolarevic, Manuel Kretzer, Oliver David Krieg, Areti Markopoulou, Achim Menges, Aurélie Mossé, Kas Oosterhuis, Claudia Pasquero, Marco Poletto, Steffen Reichert, Jose Sanchez, John Sarik

Applied Virtuality Book Series, Vol. 8

ISBN 978–3–99043–667–7

In times where the very concept of ‘nature’ is questioned not only in its philosophical dimension, but in the core of its biological materiality, we need to reconsider the interrelations between architecture and nature. This not only applies to strategies on environmental responsibility but equally on anticipatory human behavior, transient occupation and cultural or demographic variety. To address these challenges this book proposes to embrace the unknown and cultivate the architectural discipline towards an integrated, co-operative and cross-disciplinary practice that responds to natural evolution through more than formal imitation. It unravels compelling innovative and forward-thinking design narratives by leading international practitioners and researchers who investigate novel associations between architecture, nature and humanity for a future, alive architecture. Structured around the three closely cross-linked core themes “bioinspiration,” “materiability,” and “intelligence” the book engages with the starting point of an emerging new design field, where the symbiosis of physics, biology, computing and design promises the redefinition of what we call architecture today.

The Centre for Expanded Poetics is a creative research laboratory for the interdisciplinary study of structure, form, and fabrication. Initiated in 2014, the Centre for Expanded Poetics is based in the Department of English at Concordia University, Montréal.

PROGRAM OVERVIEW

This MAS class is a full-time, one-year interdisciplinary class of about 12 graduate students interested in research on the next level of Computer Aided Architectural Design. The class comprises 7 modules covering theory, basic skills in the theory of technology and architecture, programming, electronics, and CNC production of architectural artifacts. The main interest of the research is the reflection on the potentiality of upcoming technologies for future architecture. The class starts on an abstract theoretical and philosophical level and ends in exercises in designing concepts of future architecture on the so-called symbolic level.

WHAT’S NEXT?

Today, information technology is ubiquitous. Most architects have a self-taught working knowledge of visualisation and computer-aided modelling techniques. In some places, there are specialised technical programmes, especially in the areas of parametric design and experimental computer-generated production. This specialist knowledge is not sufficient, however, to keep track of the medial, technical, organisational, economical and political developments in architecture. Information technology has become a driving force in every sphere of activity for architects. But these developments are as yet badly understood, and so their interpretation is narrow and the architectural landscape diffuse.

This programme is directed at architects, designers and creative people. It offers, for the first time, not technical specialisation but architectural integration on a higher technical level. It conveys profound insights into a variety of technical areas and prompts theoretical reflection as well as promoting an independent personal stance.

The programme is demanding. Technologies are becoming ever simpler and more accessible, but defining an individual position for an architect is becoming more and more difficult. We offer no formulas or solutions. We mistrust the attitude, taken by MIT for example, that popularises, and in doing so naturalises, technology. This, to our minds, amounts to a positioning for power by way of simplification: complexities are being externalised. We believe that this is not enough: technological creation has to be complemented by expertise, not just in technology, but also in creation.

FOR FULL INFORMATION, VISIT THE CAAD WEBSITE AND HAVE A LOOK AT THE PRINT DOCUMENTATION

ARCHITECTS REVISITED

The second theory module will revisit different topoi of architectural theory. The students will work out conceptual schemas, which will allow them to compare different positions of architectural theory. They will proceed by case studies, for example on Palladio’s approaches to spatial grammar and syntax, on the cosmic scope of French Revolutionary Architecture, on Durand’s rationalization, as well as on more contemporary approaches like the machine à habiter (Le Corbusier), The Architecture of the Well-Tempered Environment (Reyner Banham), Mechanization Takes Command (Sigfried Giedion), or more recent approaches like parametricism (studio Zaha Hadid), Junkspace (Rem Koolhaas), or The Function of Form (Farshid Moussavi), among others. The students’ approaches to analytically formalizing these case studies will be prepared for synthesis and modalization. They will learn how to make these topoi more readily accessible and reconstructible by a sort of “conceptual cross-breeding” of these approaches. In a final exercise, each student will take one approach and reformulate it according to his or her own attitude, or to a fictitious attitude especially conceived and characterized for this occasion. In this way, the students are asked to produce and represent their own written manifestos. The module will start by recapitulating the achievements of the first theory module: what is at stake in the concepts of an architectonics of growth and a general theory of stratification? What is the relevance of concepts such as the plane of consistency, the abstract machine, or double articulation regarding the power of contemporary information technology and the design space that goes along with this kind of technology and infrastructure?

Keeping these aspects in mind, the students will be introduced to a comparative way of engaging with architectural history and theory. They will analyze what kinds of values certain theories have regarded as elementary, where emphasis has been placed in different theoretical edifices, and what kinds of schemes and concepts they have proposed as mediating between these dimensions. Furthermore, they will look at how the technological conditions predominant at different times reveal their impact in particular architectural manifestos and theoretical models. In particular, we will be concerned with how the different numerical spaces incorporated in the respective technological paradigms allow for different kinds of conception and construction principles, and also different paradigms of theoretical reflection.

The students will be trained in developing a sense of distinction and comparison between the spaces of potentials and constraints that different “renderings” of such construction principles allow as a design space for architecture. A great emphasis of the course will lie on analyzing the role of technology for architectural theories, as well as the different attitudes taken towards technology therein.

LIVING IN A WORLD OF ABUNDANT POTENTIALS

The first module asks about the use and the possibility of a theory for architecture, with a specific application to and perspective on CAAD. We will be concerned with the relation between architectonics as a methodological, philosophical frame of reference and its application in architectural practice, both from a historical and from a structural point of view. We will especially look at the role technology plays in that relation. Unlike mechanical technology, which operates on the substrate of physical forces, information technology takes information as its substrate. From an architectonic point of view, how can we orientate ourselves when constructing within the symbolic and its potentiality?

The rising importance of concepts like network, field, stratum and plateau, among others, indicates that we are learning to maintain a different relation with geometry and quantification, and with the respective socio-political forms of organization in space and time (territorialization/deterritorialization). On a methodological level, the possibility of synthesizing series and constructing sequences as genuinely analytical elements points to the possibility of an abstraction from the geometrical elements and the mechanical methods of aligning them. The students will be introduced to the larger problematics behind the profound changes that are characteristic of our time, as well as to some key concepts and methods for approaching and dealing with them.

The introduction to these backgrounds will help the students to develop their own position and attitude as future architects. These embedding concepts are key for learning how to design and construct within the space of abundant potentials that the symbolic is. A great emphasis of the course will lie on training the students in a kind of architectonic close reading of demanding texts. Furthermore, they will revisit conceptual elements of their daily practice, such as a plan, a line, or a surface, from a fresh perspective. In a final exercise the students will work out a manifesto for a general architectonics for narrative infrastructures within the symbolic. The results will be presented to the CAAD group at ETH, as well as to a final guest critic.

TIME AND SPACE ENGENDERED BY ARTIFACTS: ARTIFACTS AS SPECIFYING OPERATORS

Artefacts mobilize the uniformity of space and time into an open scope and infinitesimal range of possible arrangements, foldings, and compartmentalizations. With a non-romanticizing eye, we want to look at spaces of intense experience, under the following methodological assumptions: Grammar provides the possibility structure for what can be expressed in language. We will look specifically at two aspects: grammatical cases and articles. While the latter determine the definiteness or indexicality of nouns (a, this, none, etc.), cases provide the verbs with a voice (passive, active, middle), make possible subjects and objects of happenings distinguishable and relatable in a variety of ways (nominative, dative, instrumental, etc.), and are capable of expressing circumstantial information such as position or duration in space and time. In this module we will regard urban activities as verbs that engender cases, and we will regard artefacts as the specifying operators of such engendering. The goal of the module is for each student to develop the prototype of a conceptual grammar for expressing the inflections that activities (verbs) can exert on things (nouns). The experimental aspect will be to depart from an unusual perspective: we will especially be interested in specifically urban activities enhanced with technological appliances, like a hairdryer, a rice cooker, a moped, a theater space, etc. We will try to read technology in terms of auxiliary structures for expressing new verbal forms and assume that articulating intensities of experience means expressing the different forms actuality can take.

By understanding the scope of grammatical categories for what can be expressed in a way that allows for generalization, the students will gain a powerful toolbox for articulating their own brands and identities as globally active architects. We will start with an amateur adventure tour through the „genesis“ of generalizing and abstracting: in what situations and contexts, and by whom, were ideas like the postulational method for investigation, integration of areas by approximation, the coordination of points, the derivation of functions, symmetry groups, variational calculus and invariants, the generality of algebraic formulae, the binary code for symbolic logic, or the distinction between cardinal, ordinal or even ideal numbers invented? Each student will read one chapter (or two short ones) in E.T. Bell’s Men of Mathematics: The Lives and Achievements of the Great Mathematicians from Zeno to Poincaré (Touchstone 1986 [1937]) and present the core concepts in a few slides. In this way we will gather a catalog of powerful schemata of how to generalize and abstract in an enriching, not in an impoverishing way. We can regard these schemata as tools for learning to think, articulate, and dope situations.

EXAMPLES OF STUDENT WORK

Katia Ageva: Body of knowledge

Diana Alvarez-Marin: AutoMOBILE, Destruction of a myth

Grete Soosula: Reach Through not Nearing

ANY OF ALL

Every building practice needs a smallest unit to bring things into proportion and controllable relations. Traditionally, these units are derived by setting some defined magnitude as elementary. The paradigmatic example is the so-called column module in the art of Greek temple building, from which the ratio can be derived and declined across scales to put the whole building into proportion. Today we are working with computers, where the elementary units are bits. Bits are the kind of units which render information into a technologically manageable quantity. Yet bits are, literally speaking, a very strange thing – looked at within the language game of quantities, they are finite formal units of determinacy, or pure determinability. Hence we will call them Any-Bits, or Intensive Quantities. How exactly can they be thought to fit within the language game of quantities, magnitudes, numbers? The second theory module will try to get an idea of this context by revisiting different topoi of architectural theory. We will be looking at the different roles and concepts proportion, means, or ratio have played in architecture over time. How were they conceived? derived? applied? altered? legitimated? articulated? The students will proceed by case studies, for example on Palladio’s approaches to spatial grammar and syntax, on the cosmic scope of French Revolutionary Architecture, on Durand’s rationalization, as well as on more contemporary approaches like the machine à habiter (Le Corbusier), The Architecture of the Well-Tempered Environment (Reyner Banham), Mechanization Takes Command (Sigfried Giedion) or more recent approaches like Parametricism (studio Zaha Hadid), Junkspace (Rem Koolhaas), or The Function of Form (Farshid Moussavi), among others. By studying the systematicity-and-proportion question in their architectural heroes, the students reconstruct how The Architectural Whole has been articulated individually by different architects.
The students will learn how to make these topoi more readily accessible and reconstructible by a sort of “conceptual cross-breeding” of these approaches. Each student will work with one approach throughout the entire module and frequently present his or her work in progress to the class. As a final result, each student will produce a comic booklet; together these booklets will form the MAS 2012 Comix Series ANY OF ALL. The students will be introduced to a diagrammatic and comparatistic way of engaging with architectural history and theory. They will analyze what kinds of values certain theories have regarded as elementary, what emphases have been placed where in different theoretic edifices, and what kinds of schemes and concepts they have proposed as mediating between these dimensions. Furthermore, they will look at how the technological conditions predominant at different times reveal their impact in particular architectural manifestos and theoretical models. In particular, we will be concerned with how the different numerical spaces incorporated in the respective technological paradigms allow for different kinds of conception and construction principles, and also different paradigms of theoretical reflection.

EXAMPLES OF STUDENT WORK

Diana Alvarez-Marin on Rem Koolhaas

Melina Mezari on Etienne-Louis Boullée

Stylianos Psaltis on Peter Eisenman

BEYOND ENTROPY: WHEN ENERGY BECOMES FORM

The first theory module will introduce you to some large-scale perspectives for thinking about architectural questions specific to our contemporary information age. Beyond following one of the many trends that have emerged within recent years like parametric and algorithmic design, digital tectonics and materialism, we will take a more abstract view on computers and look at information technology from the perspective of infrastructures. Many new tools have been introduced and meanwhile made accessible for architects in a professional, ready-to-use format. Once you can articulate, formulate and communicate what you want to do, as an architect, the technical steps to realize it can (comparatively) easily be organized with the help of CAAD/CAM, open source and open design communities. What turns out to be most challenging today concerns what kinds of questions or horizons we can frame for our experiments, applications or projects. Yet how to give shape to visions of future lifeworlds beyond concrete utopia? The perspective we will be concerned with regards information technology within a generational history of technology. The module will introduce you to this theoretical model, and elaborate its basic arguments. The core assumption thereby is that information technology, unlike mechanical technology and its diverse machines and apparatuses, is algebraic and operates on the substrate of an interplay between electricity and information. Our starting point is twofold: information, in a technical sense, can be regarded as the formal abstraction of any content-as-representation into a symbolically operative format (digital code); electricity can be regarded as the abstraction of energy from its concrete material storage into a symbolically operative format. The work information technology is able to carry out is productive within the symbolic, prior to being rendered into materiality.
And now consider this: The sun sends 10,000 times more energy to the earth than all of mankind is currently using. Daily. Photovoltaics puts us in the historically singular position that we no longer need to rely on exploiting the natural storages; we can harvest solar energy by tapping into the solar stream directly. Of course it will still hold that the total amount of energy in the universe can be considered constant, yet the amount of energy encapsulated within agricultural growth and urban cultivation is not. It seems hardly an exaggeration to say that this changes the way we relate culture and nature: with regard to the natural storages, we can harvest, store and integrate an abundant amount of surplus energy into our cultural milieus. Our hypothesis for architecture is that the so-called information age gives rise to emerging forms of solar societies, for which an abundance of clean energy will be characteristic.
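The order of magnitude of this ratio can be checked with a few lines of arithmetic. The sketch below is only a rough plausibility check: the solar constant, the earth's radius and the figure of roughly 18 terawatts for humanity's average primary energy use are rounded assumptions that do not come from the course text, and the function names are hypothetical. Because both quantities are rates, the ratio is the same whether it is taken per second, per day or per year.

```python
import math

# Rounded physical assumptions (not taken from the course text).
SOLAR_CONSTANT_W_PER_M2 = 1361.0  # irradiance at the top of the atmosphere
EARTH_RADIUS_M = 6.371e6          # mean radius of the earth
HUMAN_POWER_W = 18e12             # assumed average primary energy use, ~18 TW


def intercepted_solar_power_w(irradiance=SOLAR_CONSTANT_W_PER_M2,
                              radius=EARTH_RADIUS_M):
    """Power of the sunlight hitting the earth's cross-sectional disc."""
    return irradiance * math.pi * radius ** 2


def solar_to_human_ratio(human_power_w=HUMAN_POWER_W):
    """How many times the incoming solar power exceeds human energy use."""
    return intercepted_solar_power_w() / human_power_w


if __name__ == "__main__":
    print(f"incoming solar power: {intercepted_solar_power_w():.3e} W")
    print(f"ratio to human use:   {solar_to_human_ratio():.0f}")
```

Under these assumptions the ratio comes out a little below ten thousand, which agrees with the figure quoted above as an order of magnitude. It counts all sunlight arriving at the top of the atmosphere, not the share that could actually be harvested.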

Energy can be decoupled from resources. With networked, information technological infrastructures, it turns into a problem of logistics. Thanks to the control of electricity by information technology, energy can be rendered into any form of energy: potential, kinetic, chemical, thermal. We can move things, transform substances, deliver messages, install and operate infrastructures of nearly any kind. But how can we start to think about the forms of living and building in solar societies? There may be, for the time being, no notions of common sense in sight of how to integrate this technological feasibility and genuinely symbolic artificiality into meaningful horizons. It is all the more exciting and important to work conceptually on how to delineate and refer to these novel consistencies that are genuinely symbolic. This first theory module aims at gathering, discussing and refining some crucial vocabulary for talking about our contemporary world.

READINGS

Ludger Hovestadt: A fantastic genealogy of the printable, in V. Bühlmann, L. Hovestadt, Printed Physics, Applied Virtuality Vol. I, Birkhäuser Basel 2011.

Vera Bühlmann: Primary abundance, urban philosophy. Information and the form of actuality in V. Bühlmann, L. Hovestadt, Printed Physics, Applied Virtuality Vol. I, Birkhäuser Basel 2011.

Ludger Hovestadt and Vera Bühlmann, The Power Book. A Radical Pathway from Energy Crisis to Energy Culture (forthcoming, draft version on the server)

Peter Sloterdijk: What happened in the 20th century? Cultural Politics: an International Journal, Volume 3, Number 3, November 2007.

Georges Bataille: The meaning of general economy. In: The Accursed Share: An Essay On General Economy. Volume I: Consumption.

Georges Bataille: Laws of a general economy. In: The Accursed Share: An Essay On General Economy. Volume I: Consumption.

Henri Lefebvre: From city to urban society in: The urban revolution. University of Minnesota Press 2003.

Gilles Deleuze and Félix Guattari: The Geology of Morals (What does the Earth think it is?) in: A thousand plateaus. Capitalism and Schizophrenia II. Continuum Press, London and New York 2004.

Gilles Deleuze and Félix Guattari: Apparatus of Capture. in: A thousand plateaus. Capitalism and Schizophrenia II. Continuum Press, London and New York 2004.

Gilles Deleuze and Félix Guattari: Micropolitics and Segmentarity. in: A thousand plateaus. Capitalism and Schizophrenia II. Continuum Press, London and New York 2004.

Monday September 27th – Friday October 21st

Seminar meetings daily: 2-6 pm -> this as a rule, be prepared for spontaneous changes (timewise)

Guest lectures: to be announced.

A COLLECTIVE VIDEO FEATURING EXCERPTS FROM THE FINAL EXERCISES:

M1_final video_trailer from MAS CAAD on Vimeo: https://vimeo.com/31427213

GRAMMARS AND LOGICS OF FORMS

Since antiquity and throughout different cultures, the theoretical study of forms is bound up with an ideality that can (somehow) equip us with forms as templates to anticipate happenings, estimate consequences, express desires and plan accordingly – in short, to organize our experience of reality in terms of sequences, series, orders. In this module on architecture and theory within the paradigm of information technology we will take a comparatistic perspective on the issue of form and formality, based on the following hypothetical narrative:

Let us assume that the theoretical study of forms originates with people starting to consider forms abstracted from things, from their immediate corporeal presence, and projecting them – theorematically – into a realm of ideality where, for the sake of the hypotheticity at stake in such abstraction, time does not pass, presence is virtual (not corporeal), and nil corruption prevails. Let us assume furthermore that this narrative of origination does not mark a particular moment in time as the beginning or end of an unfolding story of progress in intellection, but that it incorporates a theme which gains actuality time and time again in different manners. In short, we will assume that there are different symbolic constitutions proper to different conceptions of such ideality and formality. To each of these symbolic constitutions corresponds what we will call – in analogy to the Kantian forms of intuition (space and time) – a form of partitioning (eine Urteilsform) which manifests itself in how we think about counting and measuring, by numbers, magnitudes, units. Let us refer to the respective symbolicness of these constitutions, in analogy to distinctions well established in number theory, as a) natural, b) integral (including amendments regarding zero and negatives), c) real (including amendments regarding infinitesimals and transcendentals), d) complex (including amendments regarding imaginaries), and from here we find ourselves, on the further levels of abstraction with the various algebraic number fields, domains, rings, in what we could call – if it were not, inevitably, an intolerable naturalization of the symbolic – a veritable Cambrian Period in the Geology of Symbolic Ideality.

Looking at the constitution and the role of series in architecture M three

While our main interest is, of course, in the algebraic constitutions marked as d) and following, we will focus in this module mainly on the symbolic constitutions a) to c), because those are the ones we can study by learning to read the artifacts they have produced. We will try to develop and train a literacy based on studying how the different forms of partitioning allow us to govern a subject matter, by establishing different regimes of operation which we will call, allegorically, the geometry of form, the arithmetics of form, and the algebra of form. Within each of these forms of governance, what can be identified as “simple” and as “complex” varies categorically: within a geometry of forms (which we can allegorically relate to Euclid) a set of figures can be considered elements; within an arithmetics of forms (whose allegorical persona may be seen in Descartes) certain values and their ranges are considered absolute; within an algebra of forms it is the domains over which the values range that gain a fundamental role (this last paradigm is too recent to ascribe it an allegorical persona – certainly Galois would be a candidate, but also Euler, Gauss, Cayley, Dirichlet or Dedekind).

We will try to develop and train such a literacy by looking at forms and their symbolic constitutions within the registers of Grammar and of Logics. We will assume that a Grammar of Forms treats forms in terms of inclination and conjugation according to morphological and syntactical rules, and a Logics of Forms treats forms in terms of their nesting and integration into more complex constructions. Schematically speaking, we will assume that Grammars allow for and aim at the differentiation of forms through conservative modulation, and Logics allow for and aim at the differentiation of forms through integrating novel elements, values or ideas. Our core field of empirical investigation for studying grammars will be the architectural artifacts of renaissance and classicism, and for studying different logics of forms we will look mainly at artifacts from baroque and historicist architecture.

The concrete task for each student is to choose one “topos of architectural artifacts” – the villa, the garden, the church, the palace, the market place, the street, etc – and work out a plausible series that can account for the differentiation of their topos throughout different regimes of operation, and the respective grammars and logics applied thereby.

Monday January 7th – Friday January 31st 2013 Seminar meetings daily: 3-5 pm -> this as a rule, be prepared for spontaneous changes (timewise)

GUEST WORKSHOP IN EXPERIMENTAL FORMS OF LITERACY:

Unplanned Cinema – metropolis, circulation and method -> Guest lecture by Evan Calder Williams, January 28th – 31st 2013

This workshop centers on three intertwined histories – the cinema, capitalism, and the metropolis – to argue how the same problem structures all three: namely, how can the past, the frozen, the negated, and the accumulated be made to produce anew? And what does that generation leave behind? More prosaically, we will confront the issue of circulation as a way to give an account of economic forms, cultural forms, and the possible, though obscure and unassured, bond between the two. Even more simply, we’ll try to figure out, amongst other things, what the history of the action movie chase scene has to do with the history of city planning. In the workshop, we’ll do two main things: consider an argument together and develop methods of unconventional watching. First, we’ll work through a different approach to thinking about that well-established link between the metropolis and cinema, leaving behind more familiar accounts, such as how the cinema provides reflection on the shocks of experience and spatial dislocation during urbanization. Instead, reading both metropolis and cinema as forms of the generative tension between the static and the animated, we’ll see how the city – the advanced form of capital’s structure of circulation – finds expression in the cinema – the advanced form of reflection on and elaboration of that form – but, crucially, not because a film may take place in a city. The second thing we will do, in order to look at this tension and relation, is to closely watch films. More specifically, we will watch films not as individual texts to be decoded but as sets of passages and tendencies, moments in a scattered history of social and spatial experience. For this reason, we’ll consider a set of fragments, genres, recurrent moments, and landscapes.
The stress will be on developing a method of watching adequate to this emphasis on circulation and style, a method that will attempt to denaturalize the temporal and narrative habits we have: fast-forward, watch out of order, take films as collections of stills, slow things down until we can see them not as reflections on a life but as elaborations of what has been hiding in plain view.

Evan Calder Williams is a writer. His texts, talks, and performances deal with horror, technique, ornament, capital, and negation. He is the author of Combined and Uneven Apocalypse and Roman Letters. He is a Fulbright Fellow in Film Studies in Italy, where he is writing a dissertation on “anti-political” cinema in the 1970s. He writes for Film Quarterly, Mute, The New Inquiry, and Machete, and at his blog Socialism and/or Barbarism.

GUEST LECTURE BY NATHAN BROWN, UC DAVIS SACRAMENTO CALIFORNIA, USA

on Capitalism

(readings list and video documentation follow soon)

WELCOME TO THE SOLAR SOCIETY – LEARNING TO CONSIDER THE FORM OF ACTUALITY

The first theory module will introduce you to some large-scale perspectives for thinking about architectural questions specific to our contemporary information age. Beyond following one of the many trends that have emerged within recent years, like parametric and algorithmic design, digital tectonics and digital materialism, we will take a more abstract view on computers, computation and computability, and look at information technology from the perspective of its theoretical principles and their practical instantiation in the form of logistical, and increasingly global, infrastructures. As a starting point, consider this: The sun sends 10,000 times more energy to the earth than all of mankind is currently using. Daily. Photovoltaics puts us in the historically singular position that we no longer need to rely on exploiting the natural storages; we can harvest solar energy by tapping into the solar stream directly. Of course it will still hold that the total amount of energy in the universe can be considered constant, yet the amount of energy encapsulated within agricultural growth and urban cultivation is not. It seems hardly an exaggeration to say that this changes the way we relate culture and nature: with regard to the natural storages, we can harvest, store and integrate an abundant amount of surplus energy into our cultural milieus. Our hypothesis for architecture is that the so-called information age gives rise to emerging forms of solar societies, for which an abundance of clean energy will be characteristic. Energy can be decoupled from resources. With networked, information technological infrastructures, it turns into a problem of logistics. Thanks to the control of electricity by information technology, energy can be rendered into any form of energy: potential, kinetic, chemical, thermal. We can move things, transform substances, deliver messages, install and operate infrastructures of nearly any kind.
But how can we start to think about the forms of living and building in solar societies? There may be, for the time being, no notions of common sense in sight of how to integrate this technological feasibility and genuinely symbolic artificiality into meaningful horizons. It is all the more exciting and important to work conceptually on how to delineate and refer to these novel consistencies that are genuinely symbolic.

This first theory module aims at gathering, discussing and refining some crucial concepts for talking about our contemporary world. We will be guided by the assumption that the crucial aspect about dealing with concepts is not (primarily) understanding the „right“ meanings or definitions, but learning from them about the acts of abstraction involved in conception (thought). Hence, don’t be afraid of abstract thought! Information technology operates physically and directly on a symbolic substrate. In this, it differs from any earlier technical Gestalt. This „informal“ substrate consists of a restless interplay between electricity and information. We will try to explore two principal considerations. The first: information, in a technical sense, can be regarded as the formal abstraction of any specific content (as representation) into a not primarily representative, but symbolically operative format (digital code); the second: electricity can be regarded as the abstraction of energy from its concrete material storage into a symbolically operative format. The mark of distinction of information technology is that the work it is able to carry out is productive within the symbolic, prior to being rendered into physical materiality. In other, arithmetical words: multiplication is poorly understood if treated as the repetition of addition. A product cannot be comprehensively described as a sum; each such description is merely one rendering of a product’s algebraic/symbolic „identity“ – which can be „articulated“ in many ways on the basis of its possible factorizations. Hence, while considering artifacts, we must assume not only a (historical) autonomy of objects, but also ascribe them a proper integrity.
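The arithmetical remark about products and sums can be made tangible with a small sketch. The code below is an illustration only, written under the assumption that "articulating" a product means listing the ways a whole number can be written as a product of whole numbers greater than one; the function names `factorizations` and `repeated_addition` are hypothetical and do not come from the course material.

```python
def factorizations(n, smallest=2):
    """List every way to write n as a product of integers >= smallest.

    Factors within one factorization are kept in non-decreasing order,
    so each articulation of the product appears exactly once.
    """
    results = [[n]] if n >= smallest else []
    divisor = smallest
    while divisor * divisor <= n:
        if n % divisor == 0:
            # Split off one factor and articulate the remainder recursively.
            for rest in factorizations(n // divisor, divisor):
                results.append([divisor] + rest)
        divisor += 1
    return results


def repeated_addition(times, value):
    """One rendering of the product times * value as a sum."""
    return [value] * times


if __name__ == "__main__":
    for factors in factorizations(12):
        print(" x ".join(str(f) for f in factors))
    print(" + ".join(str(v) for v in repeated_addition(3, 4)))
```

For the number 12 the sketch lists four articulations (12, 2 x 6, 2 x 2 x 3 and 3 x 4), whereas the sum 4 + 4 + 4 renders only one of them, namely 3 x 4.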

OUR KEY POINTS OF INTEREST

the rôle of abstraction

the notion of the pre-specific in distinction to genericity, generality, specificity, particularity, individuality, singularity, universality, totality

the means of abstraction

the notion of articulation within the interplay between grammar, logics, mathematics, poetics, technics, mediality

the bodies of abstraction

the notion of artefacts in relation to that of things, facts, or essences

orientation within the world of abstraction

indexing: the here and now, in relation to then and there.

Wednesday Sept. 19th – Friday Oct. 26th Seminar meetings daily: 2.30- approx. 6 pm -> this as a rule, be prepared for spontaneous changes (timewise)

OUR CORE READINGS

Gilles Deleuze and Félix Guattari: The Rhizome, in: A thousand plateaus. Capitalism and Schizophrenia II. Continuum Press, London and New York 2004.

Gilles Deleuze and Félix Guattari: The Geology of Morals (What does the Earth think it is?) in: A thousand plateaus. Capitalism and Schizophrenia II. Continuum Press, London and New York 2004.

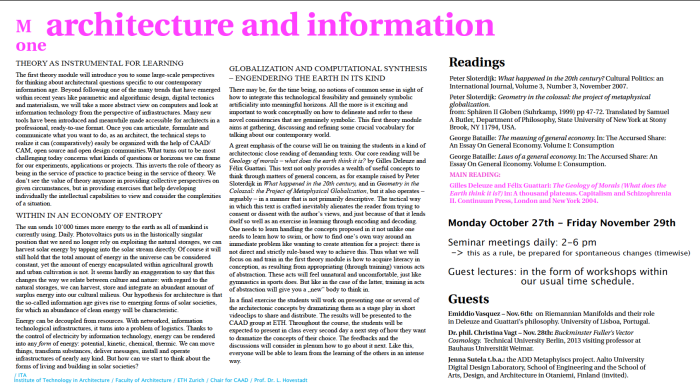

THEORY AS INSTRUMENTAL FOR LEARNING

The first theory module will introduce you to some large-scale perspectives for thinking about architectural questions specific to our contemporary information age. Beyond following one of the many trends that have emerged within recent years like parametric and algorithmic design, digital tectonics and materialism, we will take a more abstract view on computers and look at information technology from the perspective of infrastructures. Many new tools have been introduced and meanwhile made accessible for architects in a professional, ready-to-use format. Once you can articulate, formulate and communicate what you want to do, as an architect, the technical steps to realize it can (comparatively) easily be organized with the help of CAAD/CAM, open source and open design communities. What turns out to be most challenging today concerns what kinds of questions or horizons we can frame for our experiments, applications or projects. This inverts the role of theory: from theory being in the service of practice to practice being in the service of theory. We no longer see the value of theory in providing collective perspectives on given circumstances, but in providing exercises that help to develop individually the intellectual capabilities to view and consider the complexities of a situation.

WITHIN AN ECONOMY OF ENTROPY

The sun sends 10,000 times more energy to the earth than all of mankind is currently using. Daily. Photovoltaics puts us in the historically singular position that we no longer need to rely on exploiting the natural storages; we can harvest solar energy by tapping into the solar stream directly. Of course it will still hold that the total amount of energy in the universe can be considered constant, yet the amount of energy encapsulated within agricultural growth and urban cultivation is not. It seems hardly an exaggeration to say that this changes the way we relate culture and nature: with regard to the natural storages, we can harvest, store and integrate an abundant amount of surplus energy into our cultural milieus. Our hypothesis for architecture is that the so-called information age gives rise to emerging forms of solar societies, for which an abundance of clean energy will be characteristic. Energy can be decoupled from resources. With networked, information technological infrastructures, it turns into a problem of logistics. Thanks to the control of electricity by information technology, energy can be rendered into any form of energy: potential, kinetic, chemical, thermal. We can move things, transform substances, deliver messages, install and operate infrastructures of nearly any kind. But how can we start to think about the forms of living and building in solar societies?

GLOBALIZATION AND COMPUTATIONAL SYNTHESIS – ENGENDERING THE EARTH IN ITS KIND

There may be, for the time being, no notions of common sense in sight of how to integrate this technological feasibility and genuinely symbolic artificiality into meaningful horizons. It is all the more exciting and important to work conceptually on how to delineate and refer to these novel consistencies that are genuinely symbolic. This first theory module aims at gathering, discussing and refining some crucial vocabulary for talking about our contemporary world. A great emphasis of the course will lie on training the students in a kind of architectonic close reading of demanding texts. Our core reading will be Geology of morals – what does the earth think it is? by Gilles Deleuze and Félix Guattari. This text not only provides a wealth of useful concepts to think through matters of general concern, as for example raised by Peter Sloterdijk in What happened in the 20th century, and in Geometry in the Colossal: the Project of Metaphysical Globalization, but it also operates – arguably – in a manner that is not primarily descriptive. The tactical way in which this text is crafted inevitably alienates the reader from trying to consent or dissent with the author’s views, and just because of that it lends itself so well as an exercise in learning through encoding and decoding. One needs to learn to handle the concepts proposed in it not unlike one needs to learn how to swim, or how to find one’s own way around an immediate problem like wanting to create attention for a project: there is no direct and strictly rule-based way to achieve this. Thus what we will focus on and train in the first theory module is how to acquire literacy in conception, as resulting from appropriating (through training) various acts of abstraction. These acts will feel unnatural and uncomfortable, just like gymnastics in sports does. But as in the case of the latter, training in acts of abstraction will give you a „new“ body to think in.

In a final exercise the students will work on presenting one or several of the architectonic concepts by dramatizing them as a stage play in short videoclips to share and distribute. The results will be presented to the CAAD group at ETH. Throughout the course, the students will be expected to present in class, every second day, a next step of how they want to dramatize the concepts of their choice. The feedback and the discussions will consider in plenum how to go about it next. In this way, everyone will be able to learn from the learning of the others in an intense way.

Monday October 27th – Friday November 29th

Seminar meetings daily: 2-6 pm -> this as a rule, be prepared for spontaneous changes (timewise)

Guest lectures, in the form of workshops within our usual time schedule:

Emiddio Vasquez – Nov. 6th: on Riemannian Manifolds and their role in Deleuze and Guattari’s philosophy (University of Lisboa, Portugal).

Dr. phil. Christina Vagt – Nov. 28th: Buckminster Fuller’s Vector Cosmology. Technical University Berlin, 2013 visiting professor at Bauhaus Universität Weimar.

READINGS

Peter Sloterdijk: What happened in the 20th century? Cultural Politics: an International Journal, Volume 3, Number 3, November 2007.

Peter Sloterdijk: Geometry in the colossal: the project of metaphysical globalization. From: Sphären II Globen (Suhrkamp, 1999) pp 47-72. Translated by Samuel A Butler, Department of Philosophy, State University of New York at Stony Brook, NY 11794, USA.

Georges Bataille: The meaning of general economy. In: The Accursed Share: An Essay On General Economy. Volume I: Consumption.

Georges Bataille: Laws of a general economy. In: The Accursed Share: An Essay On General Economy. Volume I: Consumption.

MAIN READING: Gilles Deleuze and Félix Guattari: The Geology of Morals (What does the Earth think it is?) in: A thousand plateaus. Capitalism and Schizophrenia II. Continuum Press, London and New York 2004.

EXAMPLE OF A FINAL VIDEO BY JOEL LETKEMANN DRAMATIZING DELEUZE & GUATTARI’S “RHIZOME” AND “GEOLOGY OF MORALS” IN MILLE PLATEAUX, ENTITLED “WE”:

This is the new program for the theory modules I am teaching at the Master of Advanced Architecture Program “Architecture and Information” at CAAD, ETH Zürich.

“Over several centuries, from the Greeks to Kant, a revolution took place in philosophy: the subordination of time to movement was reversed, time ceases to be the measurement of normal movement, it increasingly appears for itself and creates paradoxical movements.“ (Gilles Deleuze, Cinema II, The Time Image)

Our emphasis in this year’s theory course lies on „time“. How can architecture relate to time in such a manner that this relation informs architecture at large, i.e. not merely through the eventual and inevitable aging of houses built, as the retrospective manifestation of architecture’s cultural history? Our hypothesis is the following: if time is no longer subjected to movement, we might learn to see the relative durations of stasis a built house is capable of capturing as being fabricated out of crystals of time. We will assume such crystals to be constituted by virtually active elements – quanta, minimal units of measurability. In physics, a quantum is understood as “the minimum amount of any physical entity involved in an interaction“. Likewise, we want to explore the postulate that „minimal amounts of any architectural entity involved in an interaction“ can be understood by attending to crystals of time as the new elements of a new architecture.

The cosmological revolution according to which time cannot be deduced from the movement of things, but rather constitutes something like a general container for all that ever had or will have an extension in space, was the revolution brought about by Newtonian physics in the 18th century: „We may regard the present state of the universe as the effect of its past and the cause of its future.“ (Pierre Simon Laplace, Essai philosophique sur les probabilités, 1814). For the science and the philosophy of modernity, time has a General Form. Today, some 200 years later, the Quantum Paradigm suggests to contemporary science and philosophy that this assumed general form of time ought to be understood as universal and yet concrete, i.e. as heterogeneous and locally singular rather than as homogeneous and globally the same. Molar processes as we know them from the solidification of matter in crystallization suggest we think about time neither in terms of continuity nor of instantaneity, but how then?

We will cross-read texts from Philosophy, (Astro)chemistry, Mathematics, Communications Engineering, and Cosmology in order to endow the key concepts that demarcate our field of interest with saturation and consistency. Some of these concepts are:

Elements, Molar Volumes, Molecular Bonding, Information, Fields, Frequencies, Phases, Communication Channels, Reciprocity, Ciphers, Encryption and Decryption, Crystals, Cryptography, Quadruple Structures or Double Articulations, Planes and Strata, Faces, Diffraction, Non-Organicity.

We meet every Monday for a full day, from 9.30 am to 12.00 noon and from 1.00 pm to 5.30 pm.

We will structure the theory day in three thematic blocks of approx. 2 hours each.

In addition, you are expected to spend a minimum of 2 hours every weekday preparing readings, small presentations, etc. The assignments are communicated week by week, as we go along.

Naturally, the program is subject to possible adaptation and change.

APRIL 28-30 2015

Tuesday April 28th 2-6 pm

Wednesday April 29th 2-6 pm

Thursday April 30th 2-6 pm

ETHZ

DARCH CAAD

BUILDING HPZ, FLOOR F

EVERYONE WELCOME TO ATTEND! Write an email to buehlmann (at) arch.ethz.ch

Natural Communication

Information-theoretic processes of communication take place via a bidirectional modelling scheme of encoding and decoding information from one domain to another. Contrary to common belief, these communicative procedures of information transfer are not direct but always follow a particular type of dynamically adjusted design, suited both to the characteristics of the involved domains and to the nature of the information to be exchanged or transferred. The basic attributes of this natural design may be summarized as follows:
I. Information flow follows a circulatory pattern between the involved domains, consisting of cycles of encoding/decoding or encrypting/decrypting information.
II. The involved domains stand in a reciprocal relation to each other with respect to the circulation pattern of information flow.
III. The information flow can be metaphorically thought of in terms of elastic cords binding the involved domains by means of a network of bidirectional connections.
IV. The reciprocal encoding and decoding processes making up the information flow are of a categorial nature and are always effected through universals of a non-spatiotemporal nature.
V. The naturality of the design, meaning its non-dependence on ad hoc choices and conventions, is characterized by concrete invariance properties of the information flow itself in relation to the involved domains.
VI. The pattern of information flow is not rigid. On the contrary, it is dynamically adjustable within the limits imposed by the invariance properties and can be metaphorically thought of in terms of processes of crystallization of the information flow.
The attributes briefly described above, which characterize the natural design of information exchange or transformation in processes of communication, can be formulated in a mathematically precise manner in the language of category theory, and in particular in terms of the notion of categorial adjunctions.
Category theory was born out of the discipline of homological algebra, which in turn traces its genesis to the merging of universal algebra with topology. The decisive moment in the history of mathematics, when for the first time the design pattern of information flow between different domains was abstracted in structural and not merely arithmetical terms, was the conception of Galois theory. The realization that the natural design of a communicative information flow follows universal rules required the recently conceived higher abstractions of category theory for a precise formulation. Notwithstanding this fact, this universal bidirectional modelling scheme of information encoding/decoding is barely known outside the arena of pure technical mathematics, and this is something that has to be remedied urgently. The purpose of this course is precisely to familiarize participants with the basic notions involved in this scheme and to build a conceptual understanding of them.

Bernhard Riemann’s 1854 habilitation thesis ‘On the hypotheses which lie at the foundation of geometry’ ought to be read as it was originally intended: as a critique of measure addressed to mathematicians and philosophers alike. Taking Deleuze’s appropriation of Riemann in Bergsonism as a point of departure, it is important to highlight and further develop the critical implications of Riemannian manifolds.

Considering that manifolds were not intended to be simply mathematical structures (in fact, Riemann exemplified them in the physical domain with colour and, more generally, with qualities), the concept of manifolds can and should be extended to different fields. The second day of our meeting will be an attempt to explore those possibilities by looking at dynamical systems (self-organized criticality) and physical processes (1/f noise). The aim will be to conceive of time as fluctuating, but without trivializing it. Perhaps if we were to experience it directly, we would have to rescale it to our own time metric, which points towards the infinitesimal as a field for exploration. This could be what Curtis Roads means by ‘microsound’, which is why sound will be manipulated, stretched and granulated. We will problematize Fourier series and reflect on the idealized sine tone.

These shifts between different fields will necessarily raise questions concerning the translatability of structures (e.g. in parametric design) and their creative potential in transdisciplinary practices.

Emiddio Vasquez holds an MAS degree in Mathematical Physics and Philosophy.

Part 1

Part 2

Part 3

Here is Emiddio’s lecture from 2013: Riemannian Manifolds and their role in Deleuze and Guattari’s philosophy

The open seminar is co-curated by Vera Bühlmann (ETH Zurich) and Erin K Stapleton (Kingston University, London). It is an event of the laboratory for applied virtuality, organized by the Chair for Computer Aided Architectural Design at the Swiss Federal Institute of Technology Zurich, ETH. The event will take place at Wirtschaft Neumarkt and Cabaret Voltaire, Zurich, on November 26th and 27th, 2014. Papers will be delivered by invited speakers; each will be 30 minutes long, followed by a 30-minute discussion.

Wednesday November 26th at Wirtschaft Neumarkt

Thursday November 27th at Cabaret Voltaire

Space for the audience is limited, but the event is open to anyone who is interested. Please refer to the event’s website for registration.

Download the poster here: sunsinversePosterA2-2.

Abstract

In this seminar, we focus on the role of speculation in theory and philosophy in a historical manner, yet with clear inclinations to our contemporary present and the currently exploding interest in speculative methods in academic practice and research contexts. In order to expand our positions beyond strictly pragmatist considerations, we locate the role of speculation between two schematically accentuated poles: the first approaches the role of speculation in the (arguably inevitable) dogmatisms of anthropological cosmopolitics, operating within domains of sufficient reason; while the second approaches the role of speculation within a rational cosmology, where it (arguably inevitably) is prone to engender what Kant terms “the antinomies” of pure reason, and which he sees as inherent to all systematic approaches to the cosmos.

We will align these positions in a matrix-like manner with vectors of (contemporary) characterizations of the sun written by thinkers such as Henri Poincaré, Georges Bataille, Jean-François Lyotard, Gilles Deleuze, and Michel Serres. Based on these characterizations we will attempt to profile different understandings of economy. Two fields will serve as ‘case-situations’ for thinking about the ‘energetic-material’ role of the sun: (1) Artificial Photosynthesis and its consequences for how we can think about food and energy, and (2) ‘metabolizing’ algorithms such as Google’s PageRank, which introduce circuitous units for measuring the relevance of data in a quasi-climatic sense, together with the peculiar economy of data’s value whose emergence we are presently witnessing, an economy that is being addressed within the registers of ‘biopolitics’ and/or ‘cognitive capitalism’.

Speakers

Terence Blake (Agent Swarm blog)

From Inversion to Many Versions. Feyerabend’s Philosophy of Nature.

Paul Feyerabend is often associated with a destructive criticism leading to an anarchism that flouts every rule and a relativism that treats all opinions as equal. This negative stereotype is based on ignorance and rumour rather than on any real engagement with his texts. Feyerabend’s work from beginning to end turns around problems of ontology and realism, culminating in the outlines of a sophisticated form of pluralist realism. This largely unknown ontological turn taken by Feyerabend in the last decade of his life was based on four strands of argument: historical considerations, cosmological criticism, complementarity, and the primacy of democracy.

Vera Bühlmann (CAAD ETHZ)

The Creative Conservativeness of Computability

Felicity Colman (Manchester School of Art)

Transmission Materialist Informatics and Regimes of Entropy

For this seminar I explore some of the core elements for a practice of creative speculation: the concepts of energy, matter, transmission, and entropy. The practice model is that of the American artist Robert Smithson. In 1971 Smithson proposed that we should compile all the different entropies. This would provide a study of ‘entropology’ (after Claude Lévi-Strauss’s description of a post-anthropology). Smithson joked about how ‘wreckage’ is more interesting than ‘structure’, and he proposes the sun and its associated entropic regimes as a methodological process, one that is productive of material systems. As any system is itself subject to change through shifts in informational matter, any computation of a system must take into account the transmission factor, and is thus always subject not only to the entropy of its materiality but also to the entropic language of its sense as a description. Smithson proposes that between the absurdity of language structure and the virtuality of the 4th dimension ‘a device for unlimited speculation’ is located.

Ref: Felicity Colman. 2006. “Affective entropy.” Angelaki (11, 1) http://www.tandfonline.com/doi/abs/10.1080/09697250600798060#.VHSbPLywHhZ

Ludger Hovestadt (CAAD ETHZ)

Continuing the Modernist Legacy by Reverse Engineering

Jorge Orozco (CAAD ETHZ)

How the PageRank Algorithm Operates Technically

Matteo Pasquinelli (Philosopher, Berlin)

The Computation of Cognitive Capital

Since the times of Smith, Ricardo and Marx, capital has clearly been a form of computation. The apparatuses of capital describe by themselves a complex mathematical system. After WWII the numeric dimension of capital was coupled with the numeric dimension of cybernetics and computing machines, and has since gradually subsumed emerging forms of augmented intelligence as well. Capital, as a form of accounting, as a form of exterior mnemonic technique, is in itself a form of trans-human intelligence. Cognitive capitalism, specifically, on the basis of all its numeric procedures, from layman’s accounting to sophisticated algotrading, from immaterial labour to scientific research, is an institution of computation. (Unfortunately, there is no video available for this lecture.)

Johannes Paul Raether (artist, Berlin)

Augmented Embodiments and Identitecture in Capitalist Society

Through the filter of his multiple Potential Identities, the Identitect Johannes Paul Raether will give an introduction to his shizzo-realist avatars and psycho-active institutions that have been crystallizing in an evolving experimental framework they call Identitecture. Appearances of figures such as Protektorama, the SmartphoneSangoma and WorldWideWitch as well as Transformella, ReproRevolutionary of the Ovulo-factories, will be presented and reflected upon, while instances, sites and practices within these Appearances will be discussed for their respective terms and methods. The aim of self-made conceptualisations of proto-academic terms such as ‘Beautified Intervention’, ‘Immersive socio-real Environment’ and ‘Augmented Embodiments’ will be to continuously construct a partial and situated, yet evolving planetary model of identity production. The lecture aims to show vectors of how to dissect from the genre of political art and attempt to travel towards a potential framing of what psycho-realist artistic practice in a capitalist society could entail.

David Schildberger (CAAD ETHZ / ZHAW Wädenswil (Beverage Design))

The Principle of Artificial Photosynthesis Generalized